Changelog

We're constantly working on new features and improvements. Here's what's new with Langdock.

Platform Speed Improvements

We just shipped massive speed improvements across our platform! While we are continuously working on model speed, you'll notice everything else is running much faster now. Plus, we released some much-requested improvements on our chat input and API.

- Embedding Models in API: The OpenAI ada-002 embedding model is now available through our API and can be used for personalizing, recommending, and searching content.

- Knowledge Folders + Assistants API: Knowledge folders are now fully compatible with the assistants API for seamless integration.

- Character Count Indicator: The text input field now shows the character count, which turns red when the limit is exceeded.
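To illustrate the search use case mentioned for the embedding model: embeddings are vectors, and content is commonly ranked by the cosine similarity between a query vector and each document vector. The following is a minimal sketch that uses tiny hand-made vectors in place of real ada-002 output (which has 1,536 dimensions). The function names and sample data are illustrative and not part of the Langdock API.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)


def rank_by_similarity(query: list[float], documents: dict[str, list[float]]):
    """Return (document id, score) pairs, most similar first."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in documents.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Made-up 3-dimensional vectors standing in for real embeddings.
documents = {
    "pricing-faq": [0.9, 0.1, 0.0],
    "onboarding-guide": [0.1, 0.8, 0.3],
    "release-notes": [0.0, 0.2, 0.9],
}
query_vector = [0.8, 0.2, 0.1]

ranking = rank_by_similarity(query_vector, documents)
print(ranking[0][0])  # pricing-faq
```

In practice, both the documents and the query would be embedded with the same model before ranking, since vectors from different models are not comparable.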

Claude 3.7 Sonnet, o3 Mini and Gemini 2.0

We've just added three powerful new models to Langdock: Claude 3.7 Sonnet, OpenAI's o3 Mini and Gemini 2.0 Flash.

Claude 3.7 Sonnet

Claude 3.7 Sonnet is the successor to Claude 3.5 Sonnet, one of the most used models in our user base. Many users already rely on the 3.5 version for writing tasks, such as emails or translations, and for coding.

The main upgrade in the new version is a dual-mode capability:

- The normal mode works like a regular LLM and answers immediately, which suits simpler tasks (like generating an email or translating a text).

- The reasoning mode allows the model to self-reflect before answering, to provide a better, deeper answer for complex problems (like strategy, maths or science).

We have added the modes as two separate models (Claude 3.7 Sonnet and Claude 3.7 Reasoning).

o3 Mini

OpenAI's o3 Mini is the latest and most efficient model of OpenAI's reasoning series.

Reasoning models, like o3 Mini, o1, DeepSeek's R1 or the Claude 3.7 Sonnet model mentioned above, use chain-of-thought thinking to split a task into several steps. This makes them useful for demanding tasks, like maths, physics, coding, complex instructions or strategic work.

o1 is the reasoning model with broader knowledge, while o3 Mini is faster than its predecessor and balances speed and accuracy. Because o3 Mini allows control over its reasoning effort, we have added the standard mode and a high-effort reasoning mode as two separate models (o3 Mini and o3 Mini High).
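The split into o3 Mini and o3 Mini High could be pictured as two presets over one underlying model. The sketch below assumes the `reasoning_effort` parameter of the OpenAI Chat Completions API. The preset table, the `build_payload` helper, and the choice of `medium` for the standard entry are illustrative assumptions, not Langdock's actual configuration.

```python
# Hypothetical presets: two selectable entries backed by the same model.
# "reasoning_effort" is a Chat Completions parameter for OpenAI reasoning
# models; using "medium" for the standard entry is an assumption.
MODEL_PRESETS = {
    "o3 Mini": {"model": "o3-mini", "reasoning_effort": "medium"},
    "o3 Mini High": {"model": "o3-mini", "reasoning_effort": "high"},
}


def build_payload(preset_name: str, prompt: str) -> dict:
    """Build a request body for the chosen preset (nothing is sent here)."""
    preset = MODEL_PRESETS[preset_name]
    return {
        **preset,
        "messages": [{"role": "user", "content": prompt}],
    }


payload = build_payload("o3 Mini High", "Prove that the sum of two even numbers is even.")
print(payload["model"], payload["reasoning_effort"])  # o3-mini high
```

Higher effort generally means more thinking time before the answer starts, so the standard entry is the better default for quick tasks.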

Gemini 2.0 Flash

We also added the new Gemini 2.0 Flash model, which is now available in the EU as well. In the previous Gemini 1.5 generation, Flash was the smaller, faster counterpart to the larger and more advanced Gemini 1.5 Pro. The new Gemini 2.0 Flash outperforms Gemini 1.5 Pro on key benchmarks and is twice as fast.

Assistant Forms

We are bringing a new way to interact with assistants in Langdock: Assistant forms. When building an assistant, editors can now choose to use the new form input method, where they can define the input fields shown to users.

You can build an interface that structures the inputs users need to provide to get high-quality results, similar to a survey form. Users who open an assistant with the new input method are presented with the form the editor built. You can use input types you know from other tools, like:

- Single-line text

- Checkboxes

- File upload

- Single-select options

- Number

- Date

This gives assistant creators more flexibility and lets them tailor the input structure to their specific needs, while making the assistant easier for other users to use.
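Conceptually, a form like this is a list of typed fields plus a check that the submitted values match them. The following is a rough sketch of that idea. The `FormField` class, the `kind` names, and the `validate` helper are hypothetical and only mirror the input types listed above; they do not reflect how Langdock stores or validates forms.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class FormField:
    name: str
    kind: str  # one of: "text", "checkbox", "file", "select", "number", "date"
    required: bool = True
    options: list[str] = field(default_factory=list)  # only used by "select"


def validate(fields: list[FormField], values: dict) -> list[str]:
    """Return a list of problems; an empty list means the input is valid."""
    problems = []
    for f in fields:
        value = values.get(f.name)
        if value is None:
            if f.required:
                problems.append(f"{f.name}: missing")
            continue
        if f.kind == "number":
            # bool is a subclass of int in Python, so exclude it explicitly
            if isinstance(value, bool) or not isinstance(value, (int, float)):
                problems.append(f"{f.name}: expected a number")
        elif f.kind == "date" and not isinstance(value, date):
            problems.append(f"{f.name}: expected a date")
        elif f.kind == "select" and value not in f.options:
            problems.append(f"{f.name}: not an allowed option")
        elif f.kind == "checkbox" and not isinstance(value, bool):
            problems.append(f"{f.name}: expected true or false")
        elif f.kind in ("text", "file") and not isinstance(value, str):
            problems.append(f"{f.name}: expected text")
    return problems


briefing_form = [
    FormField("topic", "text"),
    FormField("tone", "select", options=["formal", "casual"]),
    FormField("word_count", "number"),
    FormField("deadline", "date", required=False),
]

ok = validate(briefing_form, {"topic": "Q3 launch", "tone": "formal", "word_count": 300})
bad = validate(briefing_form, {"topic": "Q3 launch", "tone": "playful"})
print(ok)   # []
print(bad)  # ['tone: not an allowed option', 'word_count: missing']
```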

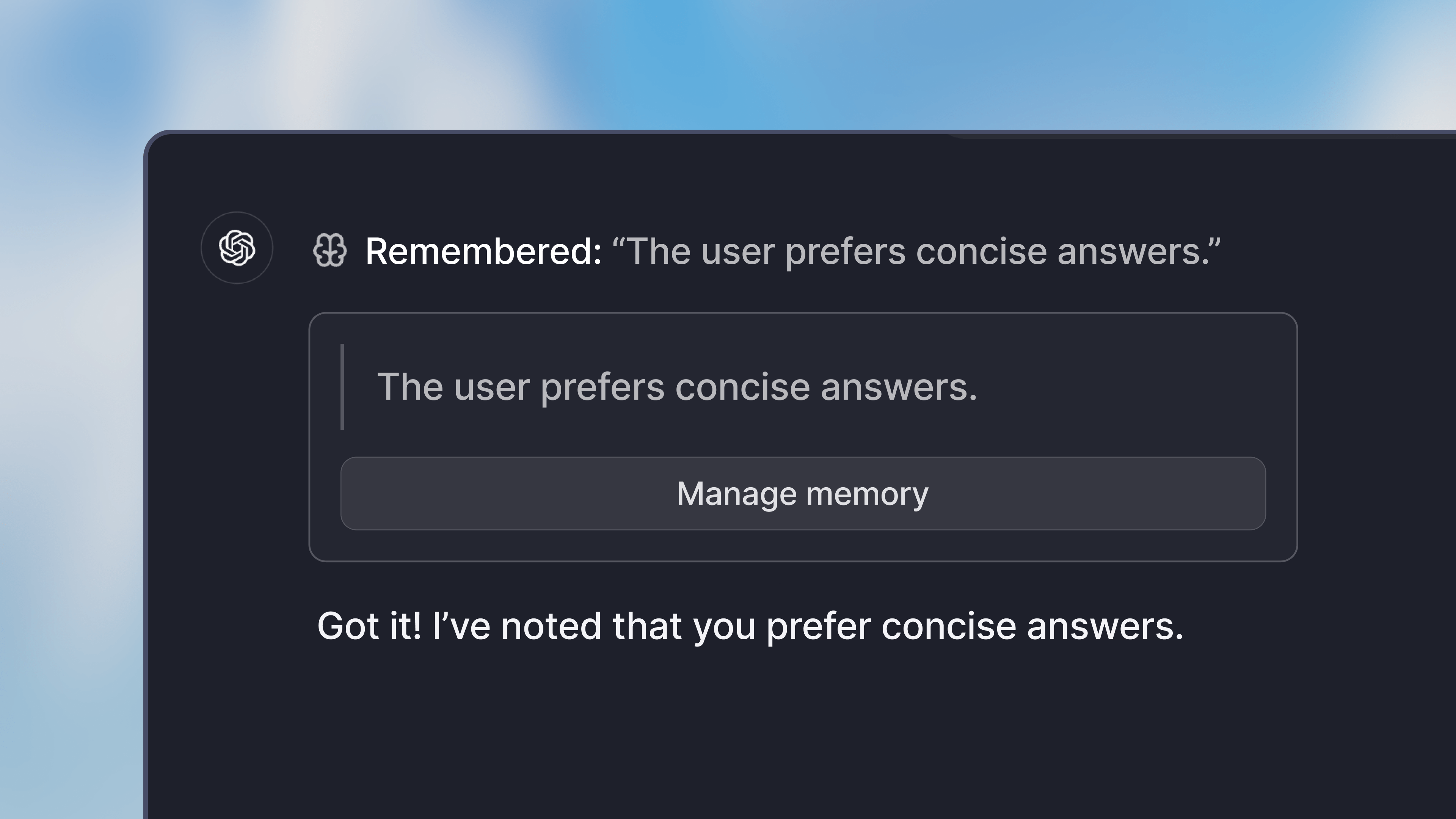

Memory

Memory offers deeper personalization of model responses by saving information from past interactions in the application.

When using memory, you can tell the model to remember certain information about you, your work or any preferences you have. It will then save the information in the application. For example, you could have it:

- Remember certain details about your job

- Share a preference for a specific style of writing

- Remember your name and other personal details

By default, Memory is disabled. To use it, head over to the preferences in your settings. There you can enable chat memory in the capabilities section.

All memories are stored in your account, and are available to you in all your chats (not assistant chats). They are not accessible by others in your workspace.

- OpenAI o3 mini: We added support for the new OpenAI o3 mini model. Admins can configure it in the settings. We consume the model from Microsoft Azure and it is available as a global deployment.

- Increasing password security requirements: We increased the minimum number of characters a password needs to have. We recommend using a password manager, the magic email link login or login through SSO.

- Langfuse integration: We added a Langfuse integration, which allows technical users to assess the performance of assistants.

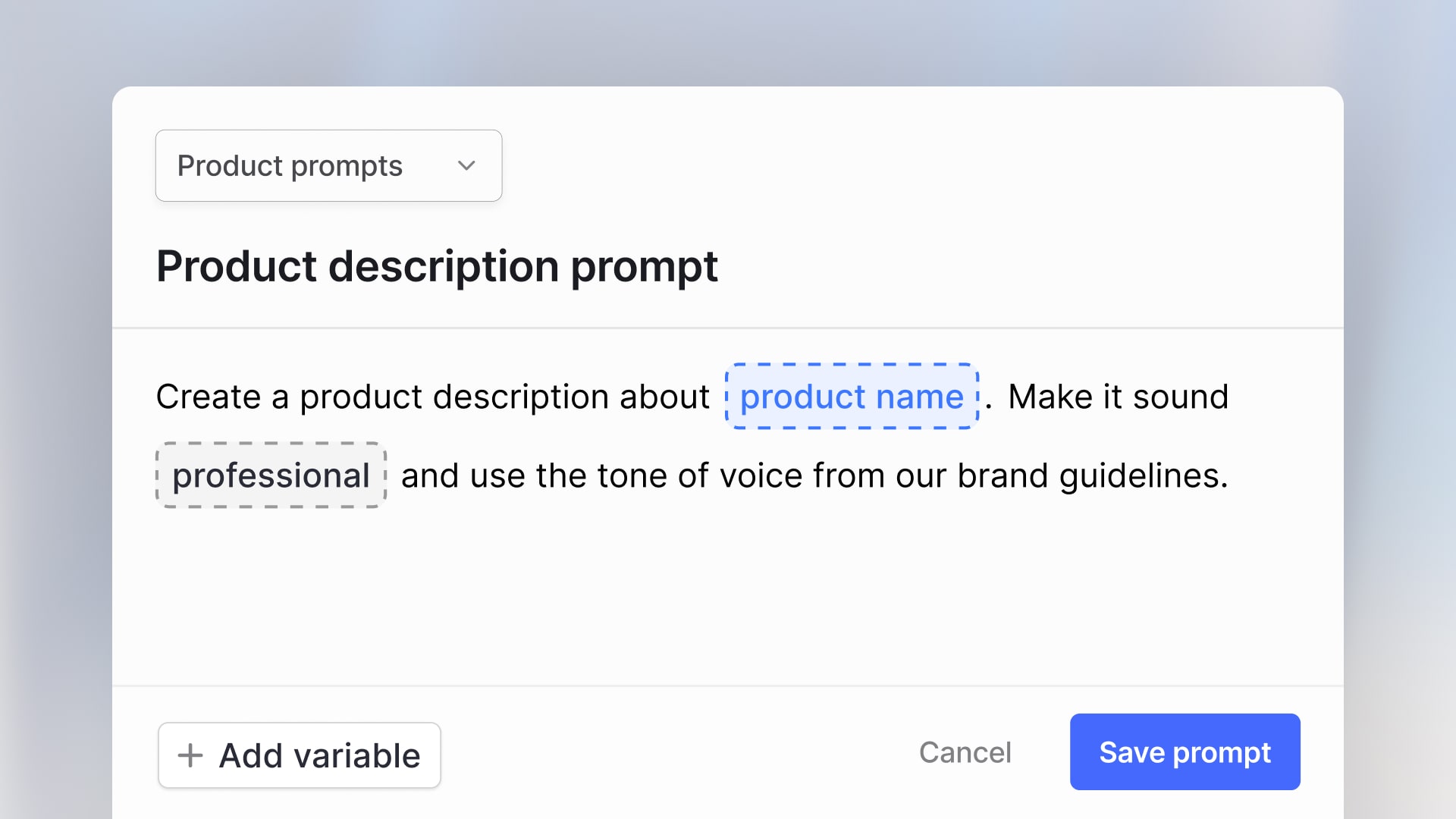

- Prompt variables: We added support for using the same input variable several times in the prompt. You fill out one variable and it gets copied to all occurrences of the same variable.

DeepSeek-R1

We've added support for the new R1 model from the Chinese AI company DeepSeek. R1 has been receiving a lot of attention in the media recently for its strong performance. The model rivals OpenAI's o1-series and is open-sourced for commercial use.

The R1 model is available in multiple versions. We are self-hosting the 32B version of the model on our own servers in the EU and consume the full 671B version from Microsoft Azure in the US. Since the model is still early and focuses on reasoning, we have deactivated tools like document upload, web search and data analysis for now.

Admins can enable the models in the settings.

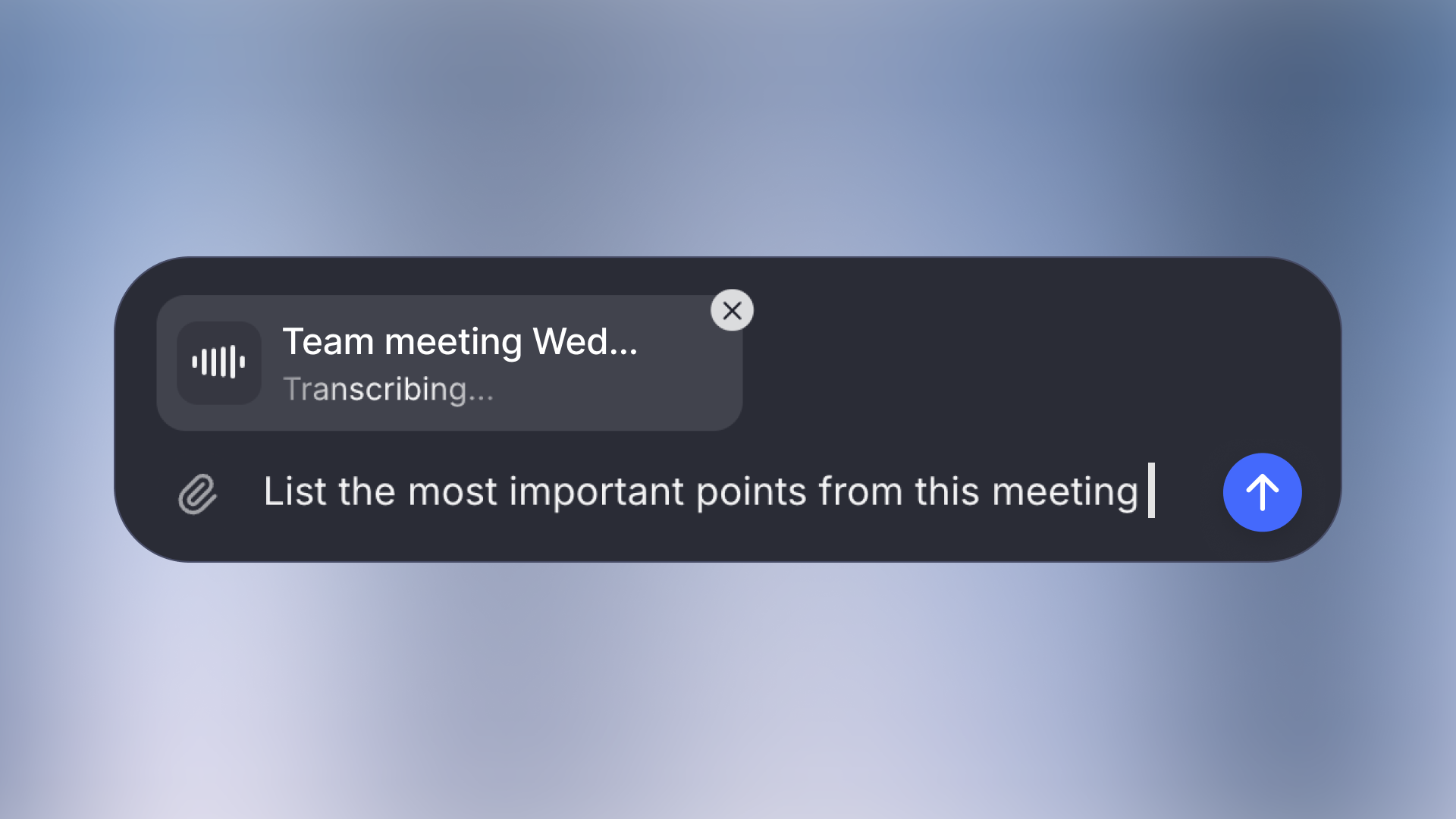

Audio & video upload in chat

We're excited to announce that you can now work with audio and video files in the chat.

Upload your recordings (up to 200MB) and our system will automatically transcribe them, allowing you to have natural conversations about the content.

You can work with all common formats including MP4, MP3, WAV, and MPEG files. Whether you need to review a team meeting, analyze a client call, or process a voice memo, simply upload your file and start asking questions about its content.
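If you prepare uploads in a script, a small pre-check against the limits above can catch files that would not be accepted. This is a minimal sketch: the `check_upload` helper is hypothetical, and both the reading of "200MB" as 200 × 1024 × 1024 bytes and the mapping of the listed formats to file extensions are assumptions.

```python
from pathlib import Path
from typing import Optional

# Limits taken from the announcement. The exact byte interpretation of
# "200MB" and the extension list are assumptions made for this sketch.
MAX_UPLOAD_BYTES = 200 * 1024 * 1024
SUPPORTED_EXTENSIONS = {".mp4", ".mp3", ".wav", ".mpeg"}


def check_upload(filename: str, size_bytes: int) -> Optional[str]:
    """Return the reason a file would be rejected, or None if it looks fine."""
    extension = Path(filename).suffix.lower()
    if extension not in SUPPORTED_EXTENSIONS:
        return f"unsupported format: {extension or 'no extension'}"
    if size_bytes > MAX_UPLOAD_BYTES:
        return "file exceeds 200MB"
    return None


print(check_upload("team-meeting.MP4", 50_000_000))          # None
print(check_upload("client-call.ogg", 1_000))                # unsupported format: .ogg
print(check_upload("voice-memo.wav", MAX_UPLOAD_BYTES + 1))  # file exceeds 200MB
```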

- Llama 3.3 model: We added the newer Llama 3.3 70B model to the platform.

- OpenAI o1: We added the o1 model from OpenAI to the platform. However, it is only available as a global deployment, which means that servers could potentially be outside of the EU. The model is turned off by default, but admins can activate it in the settings.

- Amazon Nova models: We added the Nova models from Amazon in the model settings. However, they are only available in the US at this point.

- Gemini as backbone model: Admins can set up Gemini models as a backbone model now. The backbone model defines tasks in the background for some models.

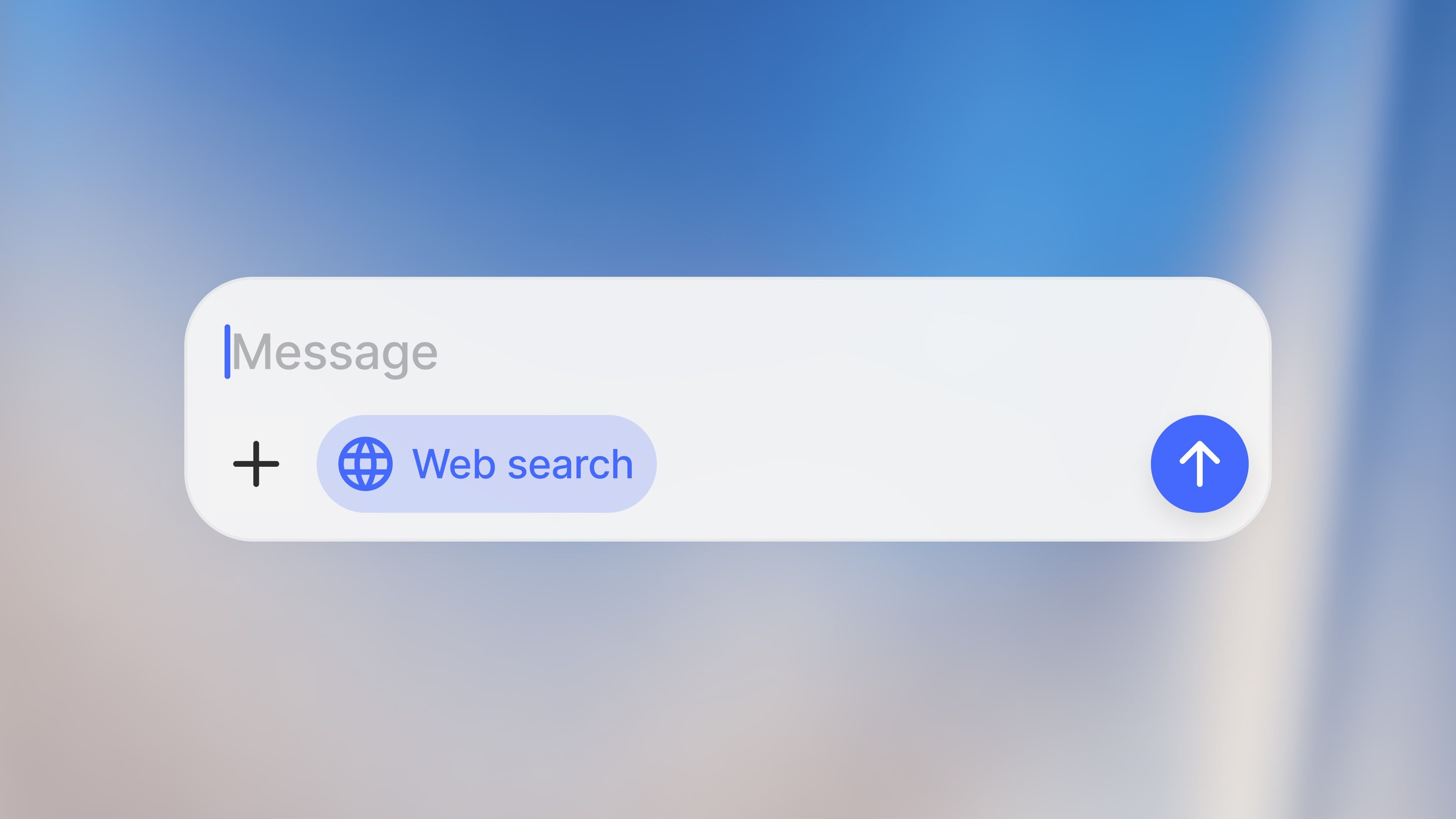

Web search mode

Langdock now offers an enhanced web search mode, providing quick and current answers along with links to relevant sources from the internet.

If web search is enabled for your workspace, you can now turn it on in the newly redesigned chat input bar. This will force the model to search the web for up-to-date news and information regarding your query.

- Sidebar resizing: You can now resize the sidebar to your preference.

- Response copying: Users can now copy responses while they are still generating.

- Spending limits in API: We now support setting spending limits for API usage in the workspace settings.

- Prompt library: Adjusted prompt library layout to show more content from the saved prompts for a better experience.

- Long chat performance: We optimized the rendering of chat messages, resulting in smoother performance in bigger chats.

- Data Analyst: We've made some larger improvements to the Data Analyst, making it more reliable.

- Upload of Python, JS, HTML, CSS, PHP: You can now upload more file types to Langdock.

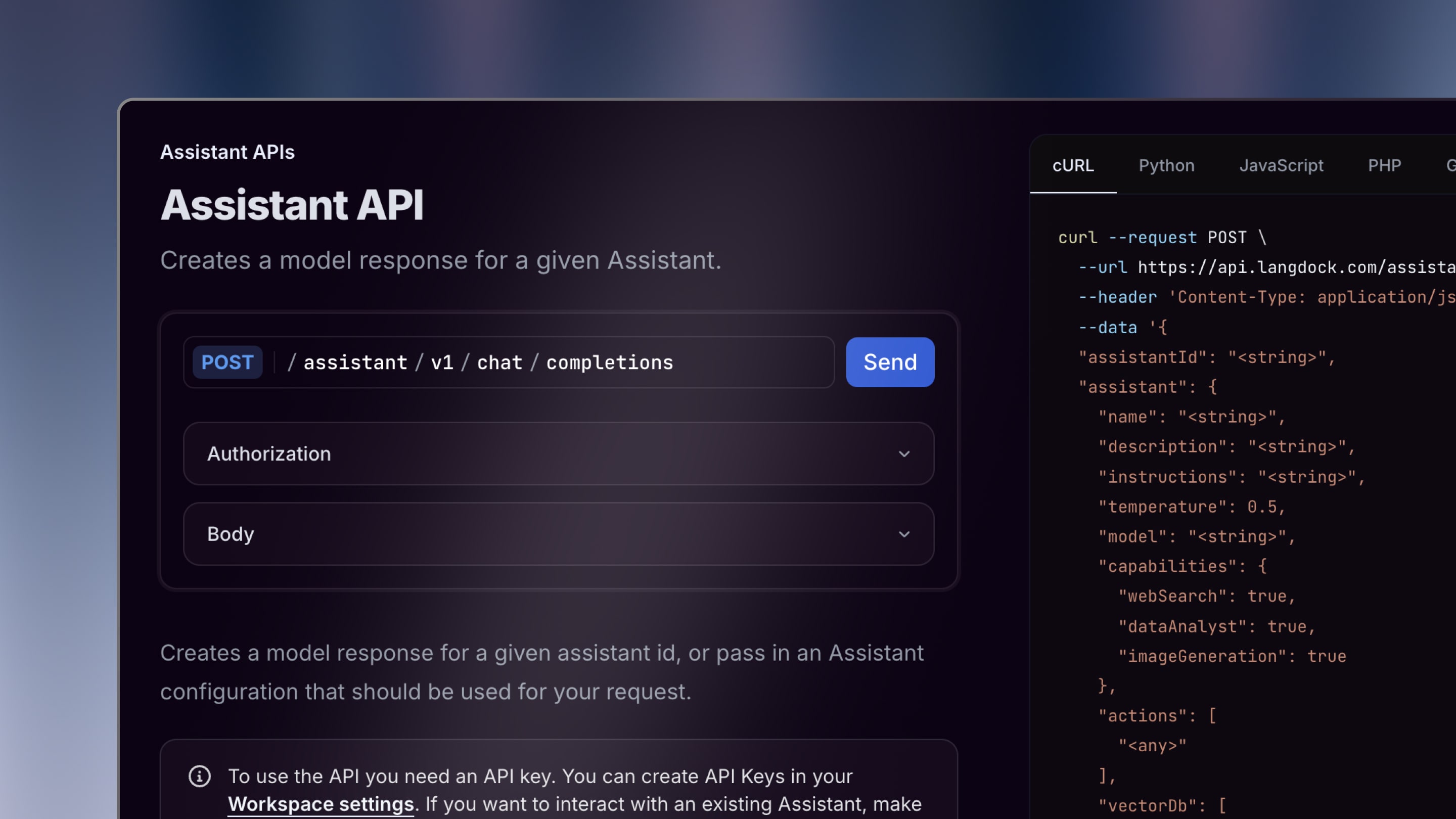

Assistant API

We launched the API for assistants. You can now access assistants, including attached knowledge and connected tools, through an API.

To enable an assistant to be accessible through the API, admins need to create an API key in the API settings. Afterward, you can share the assistant with the API by inviting the key like a normal workspace member.

After configuring the API in your workflow (see our docs), you can send messages to the assistant through the API. The API also supports structured output and document upload.
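As a rough picture of what sending a message could look like, the sketch below builds (but does not send) an HTTP request using only the Python standard library. Everything specific in it is a placeholder: the URL, the assistant ID, the JSON field names, and the Bearer-token header are assumptions for illustration. Take the real endpoint and request schema from the API documentation.

```python
import json
import urllib.request

# Placeholders only: replace with the endpoint and assistant ID from the docs.
API_URL = "https://api.example.com/assistant/chat"  # hypothetical endpoint
ASSISTANT_ID = "my-assistant-id"                    # hypothetical identifier


def build_request(api_key: str, message: str) -> urllib.request.Request:
    """Build a POST request carrying one user message (field names assumed)."""
    body = {
        "assistantId": ASSISTANT_ID,
        "messages": [{"role": "user", "content": message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request("YOUR_API_KEY", "Summarize the attached policy.")
print(request.get_method(), request.full_url)
# Sending would be: urllib.request.urlopen(request). It is omitted here
# because the endpoint above is a placeholder.
```

Note that the API key only reaches assistants that have been shared with it, as described above.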

- Formatting of copied output: We improved the formatting of output when you copy a response into a different tool.

- Share chats: When a user clicks on 'create link' in the assistant sharing menu, the URL is automatically copied into the clipboard.

- Data analyst: We improved the data analyst and its handling of CSV, PDF and Excel files.

- Pinecone as Vector Database: We added support for Pinecone as a vector database.

Command Bar (Cmd+K)

You can now navigate Langdock and search chats directly from your keyboard with the new command bar feature. This allows for quick and easy access to the information you need, right at your fingertips.

Pressing Cmd + K (Ctrl + K on Windows) opens a menu to quickly perform different operations. Here are a few examples:

- Search through all your chats

- Search for a specific assistant

- Quickly change settings, like switching to dark mode, opening the documentation, the changelog, or the support chat

We also added a search button in the top left corner which opens the command bar.

There are also new and updated shortcuts:

- Open a new chat: Cmd/Ctrl + Shift + O

- Open/close the sidebar: Cmd/Ctrl + Shift + S

- Copy the last response: Cmd/Ctrl + Shift + C

We hope these improvements make you even more productive when using Langdock.

- Long text in variables: The display of long text in variables has been improved.

- Actions improvement: Added support for multiple headers in actions.

- Redirect from sharing assistant page: When sharing an assistant, users were redirected to the assistant overview. We improved the behavior, so users are not redirected and stay in the assistant editor.

Prompt Variables

You can now incorporate variables directly into your prompts and create dynamic templates that can be easily reused across different contexts.

When creating a new prompt in the prompt library, wrap a word with {{ and }} or click on the variable button at the bottom to make it a variable. When using the prompt later, users can quickly fill out the variables in order to customize the prompt to their needs.

This makes it easy to reuse a prompt in different contexts without leaving your keyboard, and easier for others to use the prompt when you share it.

You can find more details in our section about the prompt library in our documentation.
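The `{{ }}` syntax can be pictured as simple template substitution. Below is a minimal sketch of that idea in Python. The regular expression, the helper names, and the tolerance for spaces inside the braces are assumptions for illustration, not Langdock's implementation. It also shows one value filling every occurrence of the same variable.

```python
import re

# Matches {{name}} with optional spaces inside the braces (an assumption).
VARIABLE_PATTERN = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def find_variables(template: str) -> list[str]:
    """Return variable names in order of first appearance, without duplicates."""
    return list(dict.fromkeys(VARIABLE_PATTERN.findall(template)))


def fill(template: str, values: dict[str, str]) -> str:
    """Replace every {{name}} with its value; unknown names are left untouched."""
    return VARIABLE_PATTERN.sub(
        lambda match: values.get(match.group(1), match.group(0)), template
    )


template = "Write a {{tone}} email to {{customer}}. Address {{customer}} by first name."

print(find_variables(template))
# ['tone', 'customer']
print(fill(template, {"tone": "friendly", "customer": "Dana"}))
# Write a friendly email to Dana. Address Dana by first name.
```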

- Web search: Improved web search speed and display of sources.

- Claude 3.5 Sonnet: Claude 3.5 Sonnet tended to go to the web more often than other models. We improved this behavior.

- Assistant feedback: This improvement allows users to submit feedback for assistants without free-text inputs.

- API improvement: We made our API compatible with n8n.

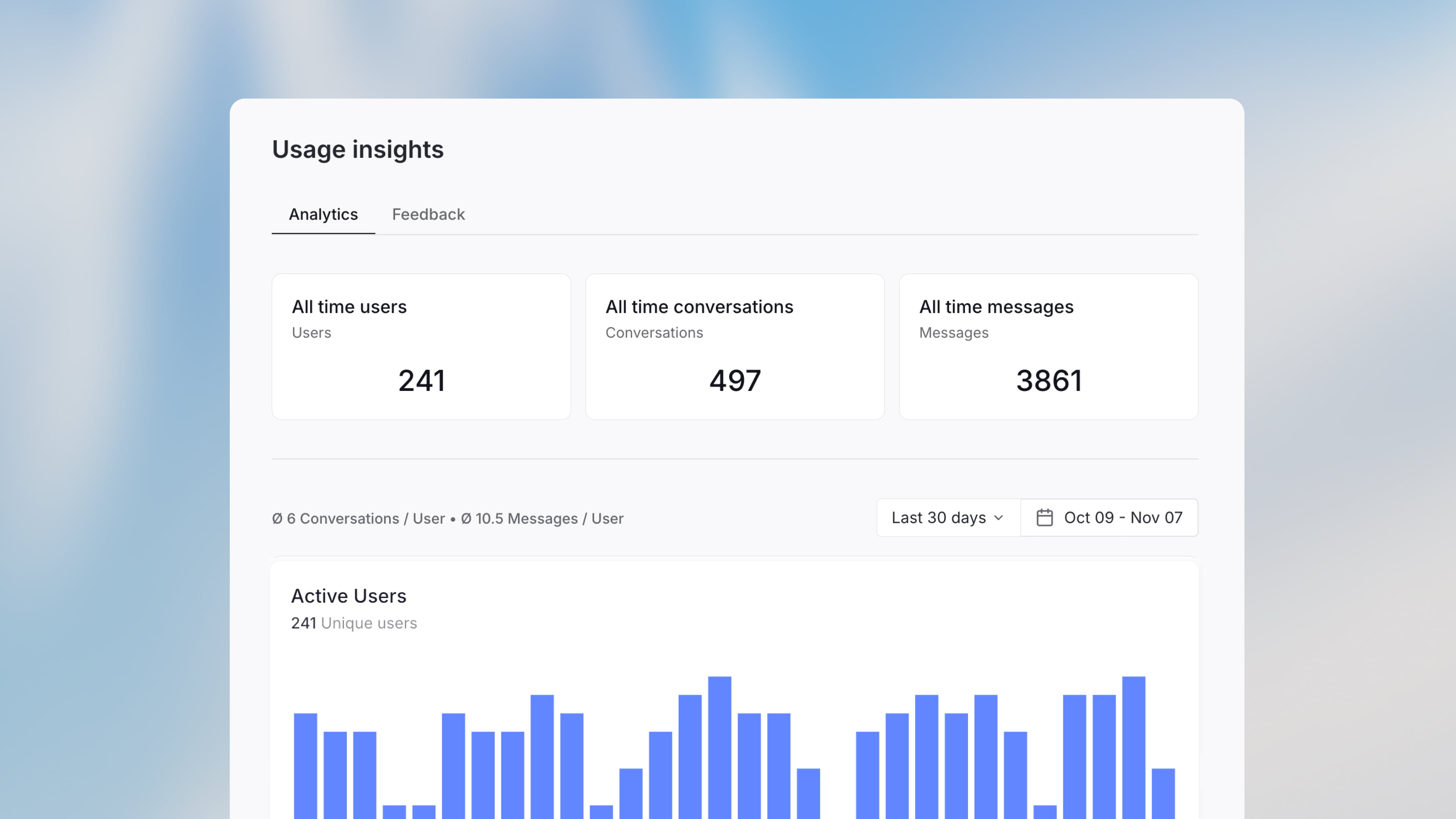

Assistant Analytics

Gain valuable insights into how your assistants are being used with the new assistant usage insights feature available in Langdock.

Users can now upvote or downvote responses and leave comments, providing direct feedback that can help you improve your assistant's configuration. Their feedback appears in the feedback tab and offers concrete suggestions for improvement.

In the analytics tab, editors and owners of an assistant can access quantitative data about usage over specific timeframes. The number of messages, conversations and users helps you understand user engagement and identify needs.

With these insights, assistant creators can assess performance and make informed improvements of the configuration, leading to a more effective and user-friendly assistant.

- Smaller improvements to canvas: We improved the performance and responsiveness of canvas on mobile, the streaming animation now runs more smoothly, and the model triggers canvas more reliably.

- New file format: We added support for .eml files.

- Resize split screen: The Canvas split screen and the assistant split screen can now be resized horizontally by dragging the border in the middle.

- Increased image file size: You can now upload images up to 20MB.

Canvas

Introducing Canvas

We're excited to launch Canvas, a new feature in Langdock that enhances your writing and coding tasks. Canvas offers an interactive window where you can edit text and code directly, receive AI suggestions, and collaborate more effectively.

Highlights:

- Inline Editing: Easily make changes and get AI suggestions.

- Coding Tools: Review and fix code seamlessly.

- Writing Enhancements: Adjust text tone and style with ease.

Canvas is now available for all Langdock users.

- Custom embedding models for Vector DB: When connecting to custom vector DBs, users can now configure which embedding model to use for converting the search queries.

- Added a Search endpoint for knowledge folders: Made our knowledge folder API more useful, with an easy way to search through uploaded content.

- Increased max-length on prompt-inputs to 120,000 characters.

- Scrolling stability improvements for answer generations: Streaming & scrolling is now super smoooooth…

- Added “Request access” capability for workspaces: Improving the onboarding process for new users and admins.

- Added a consistent breadcrumb navigation to all subpages.

Actions

Assistants can now talk to other software tools like Jira or Salesforce via actions.

Assistants can now perform API calls to external tools, opening up many integration possibilities with CRMs, ticket management tools, or internal APIs. Check out our Actions documentation for details, including specialized guides for Jira, Google Drive, and Salesforce.

- Quote parts of a response: With our new quote feature, you can now easily reference specific parts of previous model responses by selecting the text and clicking the quote button to include it in your next prompt.

- We renamed “data folders” to “knowledge folders”: You can upload up to 1,000 files in a knowledge folder and use the files in an assistant.

- API for knowledge folders: You can now programmatically upload files and set up daily jobs to keep your folders up to date. See our documentation.

- Support for .json, .xml, .vtt, .xls files: We added support for several new file formats to support more use cases within Langdock.

- Custom legal disclaimers: Admins can set up custom legal disclaimers for sharing assistants. You can find this in the workspace settings.

OpenAI o1 Models

We added the latest OpenAI models, o1 and o1 mini. Admins can enable them in the model settings. As a heads-up, these are thinking models and not a replacement for every task. The o1 models are better than previous models at reasoning and complex thinking tasks, like math, data analysis, or coding, but not at knowledge retrieval, text generation, or translation. For those tasks, the wait is a drawback: the models take a comparatively long time to start writing their answer because they think in the background first. You can read more about this in our model guide.