Changelog

We're constantly working on new features and improvements. Here's what's new with Langdock.

Assistant Templates

We launched our Assistant Templates! 🎉

One of our most requested features is here: We built 24 different assistant templates based on popular use cases we’ve seen across our customers and our own team. Now, you can easily browse, use, and customize assistant templates.

Department-specific & general templates: Find assistants tailored for specific departments (e.g. Finance, Marketing or Sales) as well as general-purpose helpers.

Flexible usage: Use assistants directly or customize the templates by adapting the instructions, adding knowledge folders, or connecting your own integrations.

Built-in best practices: Each template reflects proven approaches to boost productivity and efficiency.

Check integrations: Some assistants use integrations that may not be enabled in your workspace. Make sure the required integrations are active and relevant for your use case.

Examples of available templates:

- Google Meet Meeting Transcription

- User Feedback Analyser

- Company Documentation Q&A

- Marketing Content Writer

- Langdock Integrations Builder

- Assistant Builder

We’d love your feedback and ideas for new assistant templates! We will keep adding new templates over time.

Explore the Assistant Templates and let us know what you think!

Sync folders from integrations

You can now sync folders from your integrations directly into Langdock assistants.

Supported integrations: Access files from folders in SharePoint or Google Drive directly within Langdock and interact seamlessly with your knowledge base. Note: The integrations need to be enabled in the workspace.

Attach synced folders to assistants: Just like attaching individual files from your integrations, you can now attach folders to assistants. For example, you can attach a folder from your department, about a customer, or about a project you’re working on to your assistant. To attach a folder, just click on the “Attach” button in your knowledge section and search for your folder.

Always up-to-date: We synchronize all your folders daily for up-to-date information.

File limit and format restrictions: For now, up to 200 files per folder are supported. Spreadsheets and images are currently not supported.

Access control: Admins can configure who can use folder sync in the workspace settings under roles.

We look forward to your feedback, which will enable us to continue refining and expanding our integrations.

Actions in Chat

We're excited to announce a major productivity boost in Langdock: Actions in Chat! 🚀

You can now access powerful features directly from the chat by simply typing @. This new shortcut lets you quickly choose from four types of advanced actions. It’s a faster and more seamless way to work with multiple assistants, integrations, and knowledge folders.

Here’s an overview of the different Action types in Chat:

- Assistants: Instantly call your custom assistants to help with any task, right from the chat. This allows you to pull multiple assistants into the same chat. For example, one changelog assistant could write feature update posts, and a second assistant could then translate them into another language.

- Integrations: We also made it easier to quickly use different integrations in the chat, without the need for building an assistant. We provide 35 pre-built integrations with over 300 actions. The exact integrations you can use depend on your workspace settings.

- Prompt Library: Of course, you can still use your prompt templates from your library. This functionality moved from “/” to “@” to be consistent with the other actions.

- Knowledge Folder: Attach relevant knowledge folders to your conversation for smarter, more informed responses.

Actions in chat are helpful for easily combining assistants and integrations in your chat for one-time situations. Just type @ and get what you need, right where you’re working. For specific use cases that should scale across your teams and should be used regularly, it still makes sense to build an assistant with precise instructions.

This update helps you work even faster and more efficiently in Langdock. As always, we look forward to your feedback and can’t wait to see how you use Actions in Chat!

- Improved platform performance & speed: We made many improvements over the last few days that make Langdock faster, smoother, and more reliable, with quicker load times and improved stability.

- Enhanced Outlook calendar integration: You can set up recurring events, host online meetings, and choose meeting providers with ease. Scheduling is simpler with bulk event deletion, better filtering, and smarter time suggestions.

- Integration of Microsoft Planner: Manage plans, tasks, and buckets effortlessly. View comments and automate workflows when tasks are created or completed.

- Integration of AWS Kendra: You can search AWS Kendra with natural language queries, advanced filters, and personalized results, making it easier to find the right information quickly.

GPT-5

We’re excited to announce that GPT-5 is now available to Langdock users!

OpenAI just released the GPT-5 series, its most advanced language models yet. We just added GPT-5, GPT-5 mini and GPT-5 nano to Langdock. The models are an upgrade to the previous GPT-4.1 models. GPT-5 mini already performs better than GPT-4.1, and even o3, in some domains.

With a much larger context window and faster response times, GPT-5 enables more seamless and productive workflows, whether you’re handling complex research, generating long-form content, or building advanced assistants.

The key highlights are:

- Enhanced Reasoning & Coding: Achieve better results on technical, analytical, and programming tasks.

- Longer Context: Work with extended documents and conversations without losing context.

- Faster Responses: Enjoy a noticeably quicker, more interactive response time.

You can now select the new models in your chats and assistants.

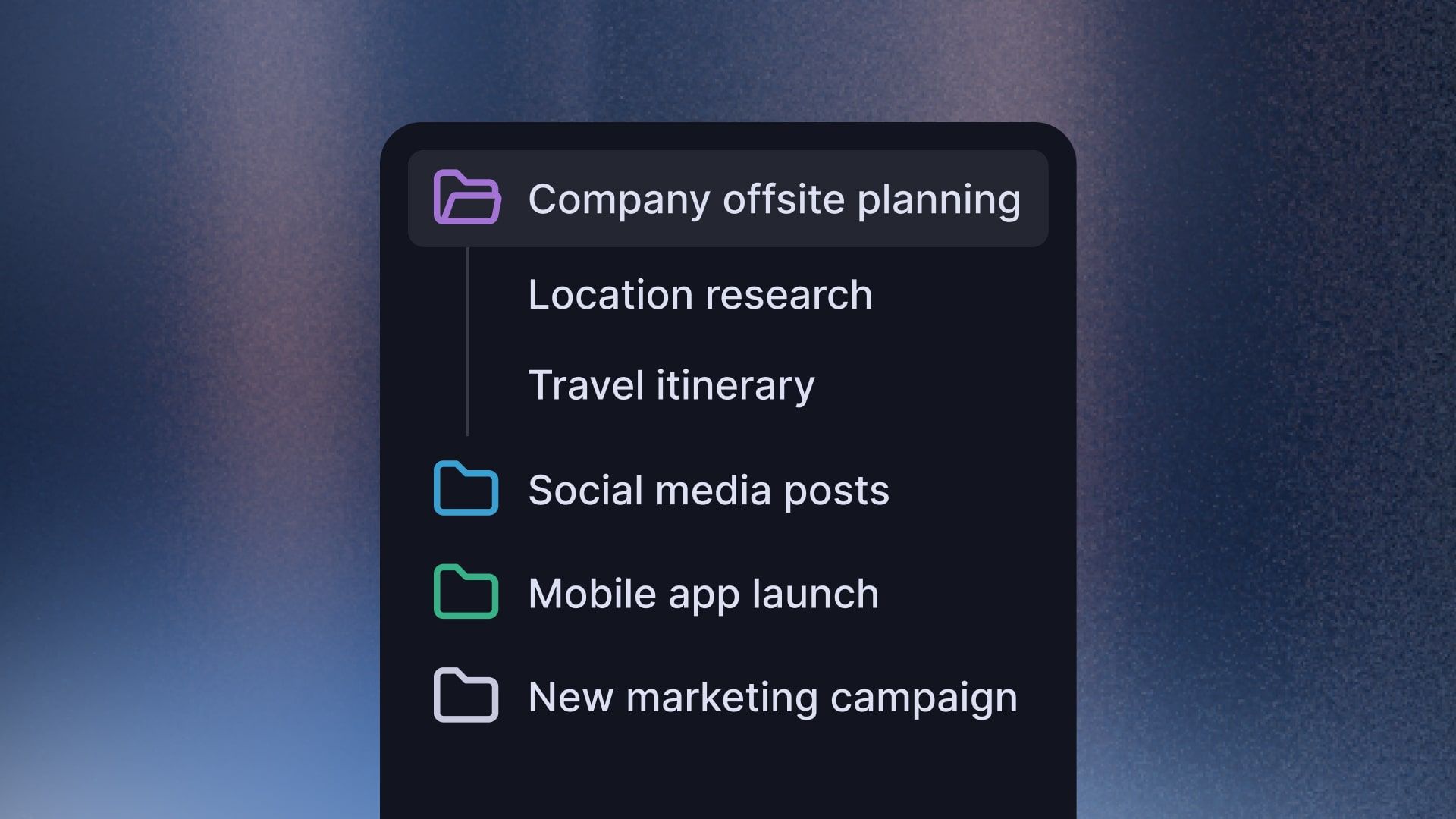

Projects

We're excited to introduce Projects, a new organizational feature that gives you more flexibility in organizing your chats and helps you work on bigger projects.

What are Projects?

Projects allow you to group chats together in a specific context. Whether you’re working on the next big marketing campaign or another project, all of your chats will stay together. To improve your workflow even further, you can attach custom instructions and files to each project.

Each project includes:

- Attached files that are accessible across all project chats without re-uploading

- Project-specific instructions that customize AI behavior for those chats

How Projects Work

- Create a project for your specific purpose

- Upload relevant documents

- Set custom instructions to guide AI behavior for this project

- Start chatting with full access to project context and files

You can create your first project from the sidebar. We'd love your feedback on how this fits into your workflow!

MCP Support

We are excited to announce that Langdock now supports the Model Context Protocol (MCP)! 🚀

What is MCP?

MCP is an open protocol that standardizes how AI applications connect to data sources and tools. Think of MCP like a USB-C port for AI applications. Just as USB-C provides a standardized way to connect your devices to various systems, MCP provides a standardized way to connect AI models to different data sources and tools.

In Langdock, you can connect your assistants to remote MCP servers over the internet. This opens up additional data sources beyond the existing integrations.

Key Features

- Flexible Authentication: Works with both simple API key authentication and secure OAuth connections for services that require it.

- Automatic Tool Discovery: MCP connections automatically discover the tools each server makes available.

- Seamless Integration: MCP tools work naturally within your assistant conversations (can require confirmation and display inputs and outputs).

- Continuous Permission Management: MCP integrations work within our existing integration framework, so you can manage permissions, sharing, and usage just like your other Langdock integrations.

- Trusted Compatibility: We verified compatibility with popular MCP servers including Microsoft Docs, Cloudflare Docs, and GitHub. Generally, we support all open MCP servers (without authentication) and MCP servers that use API key authentication.
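To illustrate what automatic tool discovery involves at the protocol level, here is a minimal sketch of the JSON-RPC 2.0 messages an MCP client exchanges with a server: the `initialize` handshake, the `notifications/initialized` confirmation, `tools/list` for discovery, and `tools/call` to invoke a tool. It only builds and parses message dictionaries and leaves out the network transport. The protocol version string, client name, and the `search_docs` tool are assumptions chosen for illustration, and this is not a description of how Langdock implements MCP internally.

```python
import json
from itertools import count
from typing import List, Optional

# Every JSON-RPC request needs a unique id; a simple counter is enough here.
_request_ids = count(1)


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object, the message format MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def initialize_request(client_name: str, client_version: str) -> dict:
    # Assumption: use the protocol revision your MCP server actually supports.
    return make_request("initialize", {
        "protocolVersion": "2025-03-26",
        "capabilities": {},
        "clientInfo": {"name": client_name, "version": client_version},
    })


def initialized_notification() -> dict:
    # Notifications carry no id; this one confirms the handshake is complete.
    return {"jsonrpc": "2.0", "method": "notifications/initialized"}


def list_tools_request(cursor: Optional[str] = None) -> dict:
    # tools/list is paginated: pass the previous result's nextCursor to continue.
    return make_request("tools/list", {"cursor": cursor} if cursor else None)


def call_tool_request(name: str, arguments: dict) -> dict:
    # Invokes one discovered tool with arguments matching its inputSchema.
    return make_request("tools/call", {"name": name, "arguments": arguments})


def tool_names(response: dict) -> List[str]:
    """Return the names of the tools listed in a tools/list response."""
    return [tool["name"] for tool in response.get("result", {}).get("tools", [])]


if __name__ == "__main__":
    # Hypothetical server reply advertising a single tool.
    sample_response = {
        "jsonrpc": "2.0",
        "id": 2,
        "result": {"tools": [{
            "name": "search_docs",
            "description": "Search the documentation",
            "inputSchema": {"type": "object",
                            "properties": {"query": {"type": "string"}}},
        }]},
    }
    print(json.dumps(initialize_request("example-client", "0.1.0")))
    print(json.dumps(initialized_notification()))
    print(json.dumps(list_tools_request()))
    print(tool_names(sample_response))  # ['search_docs']
    print(json.dumps(call_tool_request("search_docs", {"query": "MCP"})))
```

In practice these messages travel over an HTTP-based transport, and API key or OAuth credentials are attached at that layer rather than inside the JSON-RPC payload.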

How to Get Started with MCP

You can set up MCP integrations through the "Add integration" button in the integration menu. Then, you can choose whether to build an integration with JavaScript (as before) or connect a remote MCP server. To set up MCP integrations, users need permission to create custom integrations.

Detailed instructions can be found in our docs:

https://docs.langdock.com/resources/integrations/mcp

Note: MCP is still in a very early stage of development. We strongly recommend thoroughly testing any integrations you build using MCP before relying on them in production.

- Answer generation continues in the background: Response generation now continues when you navigate away from a chat.

- Optimize assistant instructions with AI: You can now optimize your assistant instructions with AI or dictate your prompt by clicking the buttons in the instruction field.

- Optimized Assistant configuration: We swapped the positions of the input type and instruction fields in the Assistant builder to improve usability.

- Dismissible notifications in the top right corner: You can now dismiss notifications with a click.

- Improved CSV and Excel content preview: We improved the preview and handling of spreadsheet files when uploading them to assistants and chats.

- Enhanced model description cards: The model selector now includes precise capabilities and short descriptions, making it easier to select models with reasoning, web search, and data analysis capabilities.

- Improved assistant form builder: Assistant forms now support up to 15 fields.

- Custom model test button: Custom models can now be tested in the workspace settings with a test button.

- Attachments without text prompts: You can now submit files directly without a prompt.

- Context Actions: Previously, you had to manually add dependent actions, such as fetching a channel ID before sending a Slack message to a group chat, so that the assistant could perform the task correctly. This often meant trial and error to figure out why the assistant wasn’t working as expected. Now, actions that require additional information such as IDs include the necessary dependent actions by default. These are marked by a small link icon on the action tile and are shown when you expand the action with the small toggle.

New in Langdock

Deep Research

We're excited to launch Deep Research, a new chat feature designed for comprehensive, long-form research reports that require in-depth analysis across multiple sources.

What is Deep Research?

Deep Research is built for complex research projects that demand thorough investigation rather than quick answers. It conducts multiple strategic web searches, examines findings from different sources, and synthesizes everything into a well-structured report.

When does it make sense to use Deep Research?

Deep Research is particularly powerful for background research, exploring industry trends, market analysis, competitive analysis, academic research, strategic planning, and any task requiring comprehensive information gathering from multiple online sources. The resulting report with citations can be downloaded as a PDF, saving you hours of manual research and compilation.

How does it work?

Deep Research intelligently plans its approach to gather insights from multiple angles. You can watch the search activity in real time and see sources as they are added. No matter which model you select in the chat, Deep Research always uses pre-configured models to ensure the best possible quality. There is currently a usage limit of 15 searches per user per month.

Deep Research is now available across all workspaces! 🚀

On another note: Microsoft Azure is currently experiencing some speed issues with GPT-4.1. While we still recommend it as the default model, you may also try GPT-4.1 mini as a faster alternative.

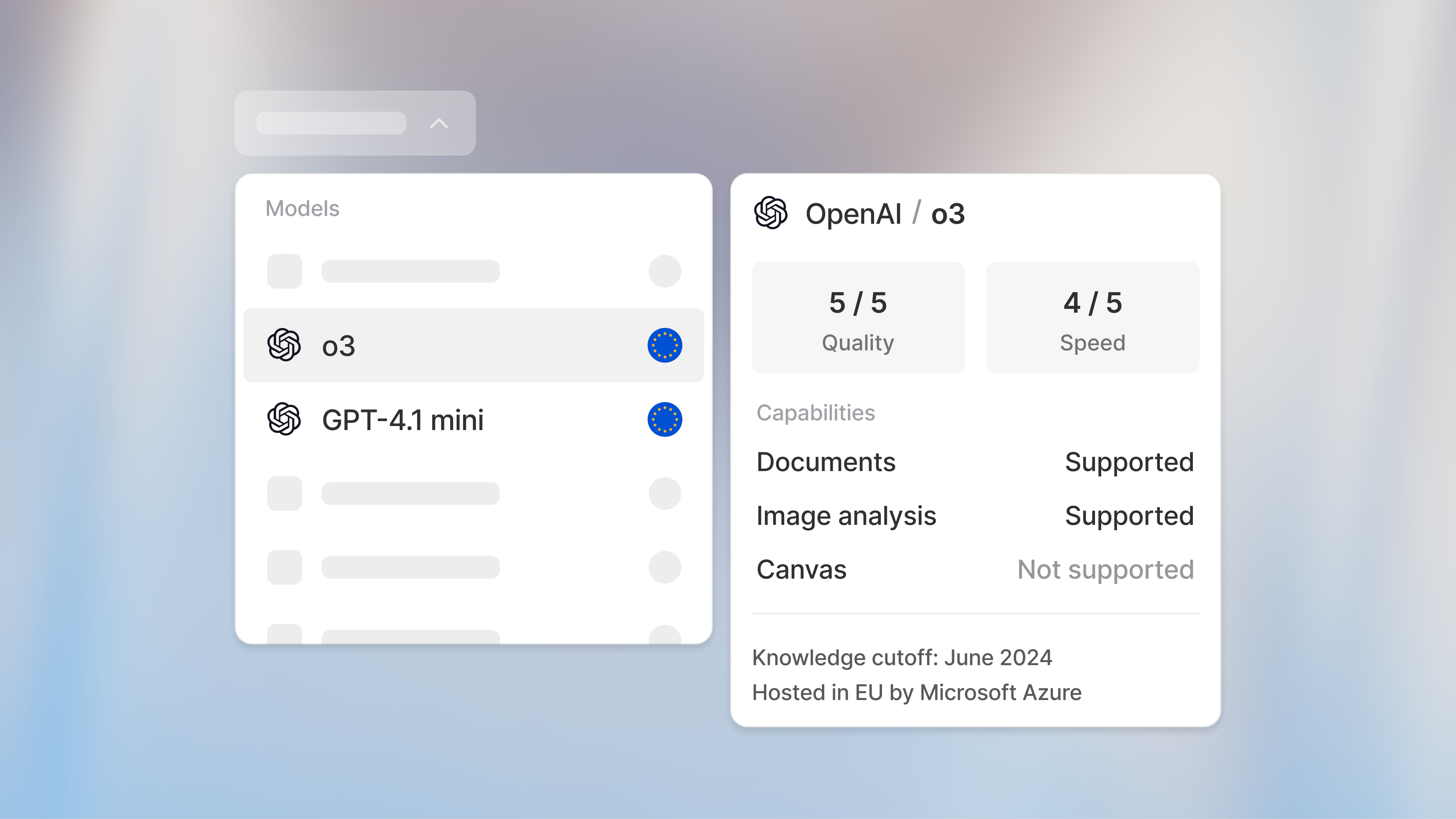

New models available!

o3 and GPT-4.1 mini

We are excited to announce that o3 and GPT-4.1 mini are now available in Langdock! 🚀

o3 is OpenAI's most powerful reasoning model that sets new standards across coding, math, science, and visual reasoning tasks. It excels at technical writing, instruction-following, and tackling complex multi-step problems. o3 is perfect for strategy, research, and advanced coding tasks that demand sophisticated problem-solving capabilities.

GPT-4.1 mini is the smaller, faster version of GPT-4.1, designed for everyday tasks with significantly faster responses. This efficient model delivers performance competitive with GPT-4o while reducing latency by nearly half. GPT-4.1 mini excels at high-volume tasks, real-time applications, and rapid content generation. This model was previously available as a global deployment and is now available in the EU.

Both models are now live. Choose o3 for advanced reasoning and intelligence, or GPT-4.1 mini for speed and efficiency!

Important Notice: We will deprecate GPT-4.5, o1 (Preview), o1 mini and Gemini 1.5 Pro on the 11th of July. These models will no longer be available on Langdock from that date forward. We recommend switching to the newer versions of these models.

Updated Canvas

We are excited to announce that Canvas is now out of Beta and available to all Langdock users! 🎉

Canvas is our interactive editing environment to help you with your writing and coding tasks. With Canvas, you can edit text and code directly while receiving AI suggestions.

This release comes with a few significant updates based on your feedback since the launch of the beta. Thank you to everyone who contributed their thoughts and suggestions to help us improve the Canvas feature.

Highlights of this release:

- Streamlined Interface: We have decluttered the interface to make your workflow smoother and more intuitive.

- Integrated Coding Tools: You can now generate and run code in Canvas directly through the integrated terminal.

- Download as File: You can now download your canvas as a PDF, Word, or Markdown document.

- Simplified Model Selection: There is no longer a need to select a separate Canvas model; everything works seamlessly with the main models you already use every day.

You can ask the model to use Canvas, or toggle Canvas mode on by clicking the button in the chat field.

We aim to continually improve Canvas and look forward to seeing how you use it to power your work.

- Improved “New chat” and “Search” buttons: We improved the design of the buttons in the navbar to open a new chat and open the command bar.

- Action permissions: Admins can now define access permissions for individual actions by granting access to individual users and/or groups. Open the integration you want to manage to find the new option for managing its actions.

- New integrations: We added integrations for Monday and Google Meet (to retrieve transcripts).

- File support: The file formats .dotx, .rtf, .kml, .gml, .dxf, .gpx, .shp, .shx, .dbf, and .prj are now supported.

- Improved document uploads: We improved the uploading behavior of files, especially for larger amounts of files.

- Mermaid diagrams: Mermaid flowcharts can now also be saved as images.

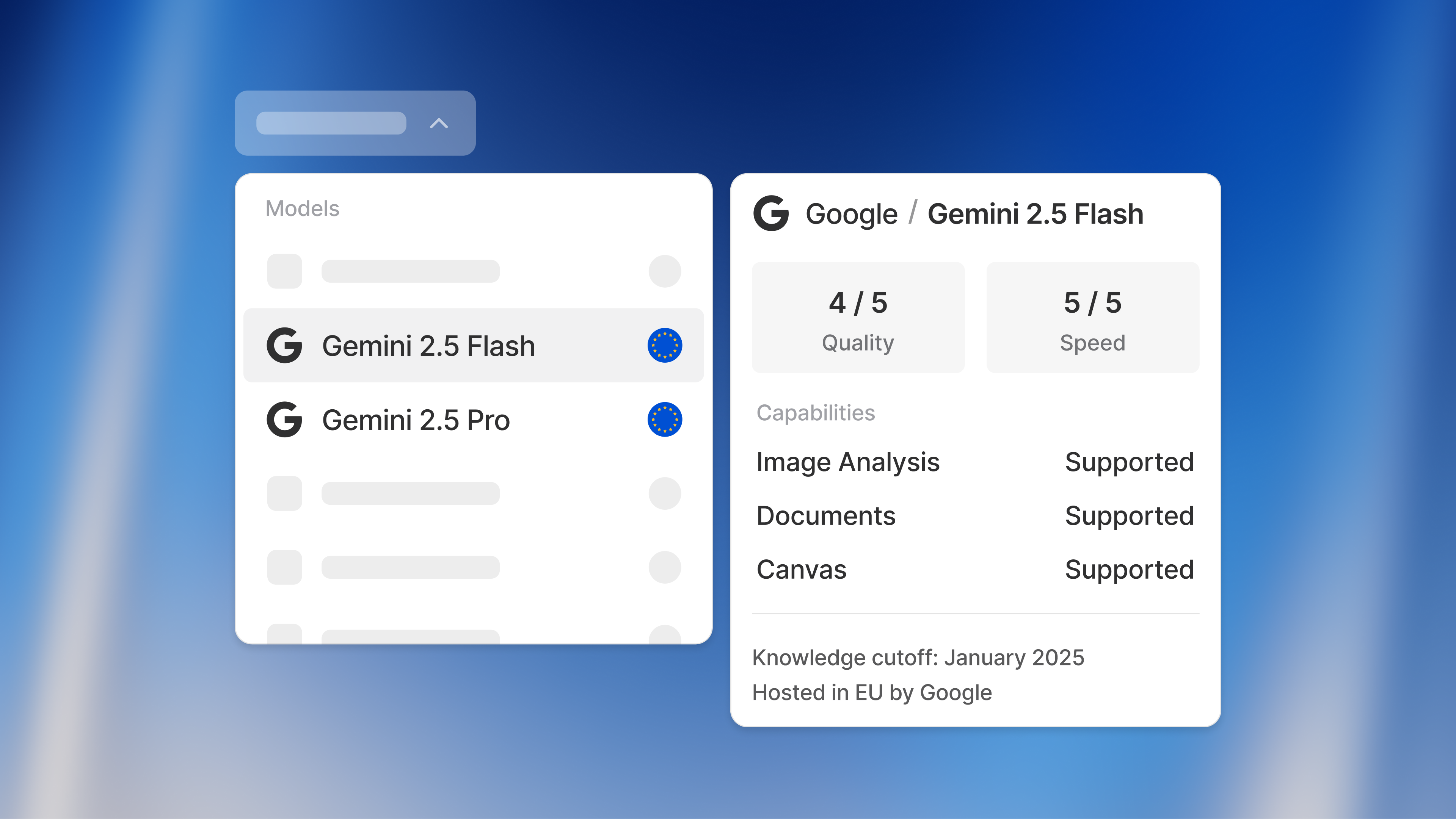

New models available!

Gemini 2.5 Flash and Gemini 2.5 Pro

We are excited to announce that Gemini 2.5 Flash and Gemini 2.5 Pro are now available in Langdock! 🚀

Gemini 2.5 Flash is the faster and more efficient model of the 2.5 version, designed for real-time, high-volume tasks. It delivers fast responses, supports up to 1 million context tokens, and is ideal for instant writing, summarization, and Q&A.

This makes it a great fit for assistants and chats where speed and efficiency matter most.

Gemini 2.5 Pro is Google’s most advanced model for complex reasoning, coding, and multimodal tasks. Comparable to Claude's "Sonnet" and OpenAI’s “o” models, Gemini 2.5 Pro is ideal for strategy, research, and coding tasks requiring advanced problem solving.

Both models are now live: choose Flash for speed and efficiency, or Pro for intelligence and depth.

Workspace Admins can activate these models in the Workspace Settings under the "Models" tab.

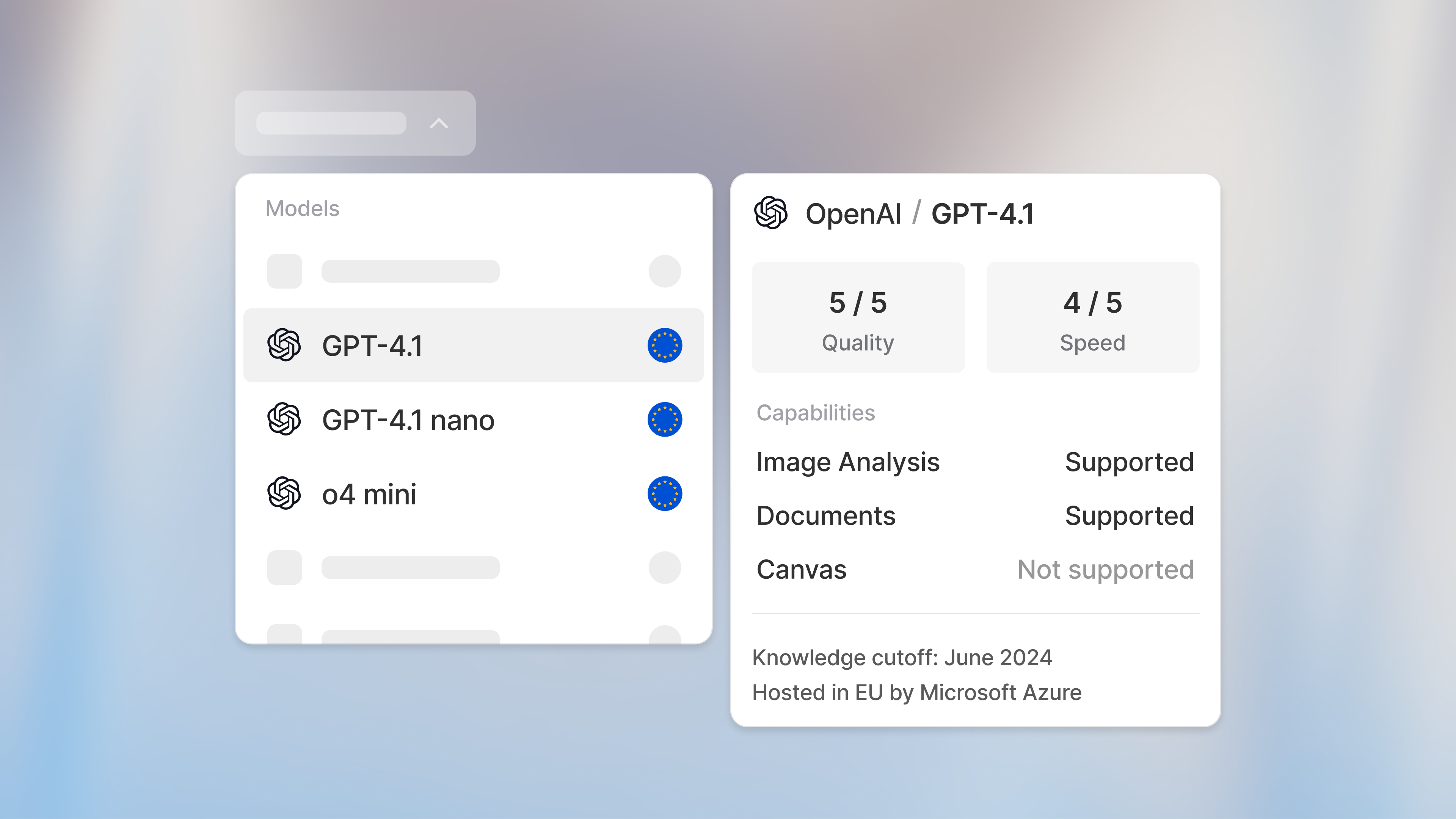

GPT-4.1 and o4 Mini

We are excited to announce that GPT-4.1 and o4 Mini are now hosted in the EU and available in Langdock. 🙌

GPT-4.1 is the latest version of the GPT-4 series, performing better on speed and quality than previous versions. We set GPT-4.1 as the default model for all new workspaces on Langdock. Additionally, all workspaces previously set to GPT-4o, GPT-4o Mini, or GPT-4 have been migrated to GPT-4.1 as their default model.

We are also pleased to introduce o4 Mini as a new model option on Langdock. o4 Mini is designed for fast, efficient reasoning and excels at handling complex instructions, coding, and strategic tasks. Similar to o3 Mini, it offers a strong balance between speed and accuracy.

- Sunsetting of GPT-4: As shared in the last changelog, by the end of this week, GPT-4 will be fully deprecated on Langdock, following the discontinuation of support by Microsoft Azure. Assistants previously using GPT-4 will now automatically use the workspace default model or GPT-4.1.

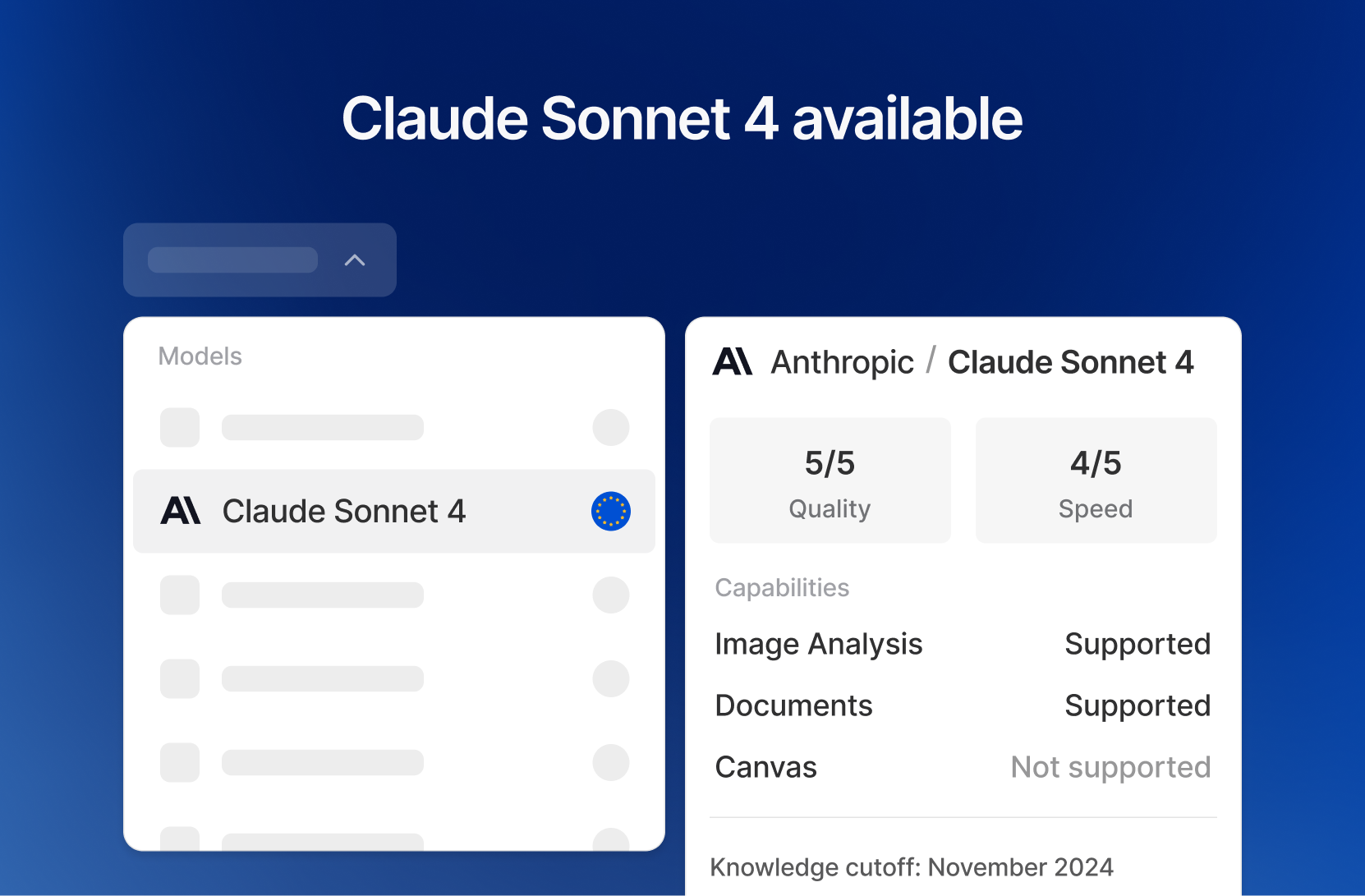

Claude Sonnet 4

We've integrated the new Claude version into Langdock! 🚀

Claude Sonnet 4 is the successor to Sonnet 3.7, a model favored by many of our users for text generation, coding and problem-solving tasks.

As with Sonnet 3.7, Sonnet 4 can use reasoning for complex tasks, so we added the two modes as separate models: Claude Sonnet 4 (Preview) and Claude 4 (Reasoning Preview).

The standout features of Claude Sonnet 4 include:

- Text generation: Like previous Sonnet versions, Claude Sonnet 4 maintains its strength in producing natural, human-like text for writing tasks including emails, translations, and content creation.

- Enhanced coding performance: Sonnet 4 excels in coding tasks, from generating solutions to navigating complex codebases with near-zero errors.

- Improved steerability: The model offers greater control over its behavior, allowing you to tailor its responses to your specific needs.

- Optimized efficiency: Designed to balance capability and practicality, Sonnet 4 is ideal for assistants requiring both speed and depth.

- Sunsetting of GPT-4 Turbo: On June 8, GPT-4 Turbo will be deprecated in Langdock. Microsoft Azure is discontinuing support for this model, and the newer GPT-4o and GPT-4.1 models offer improved performance and speed. Assistants using GPT-4 Turbo will be switched to the workspace default model.

- Export assistant feedback: Assistant editors can now export the feedback from the usage insights as a CSV.

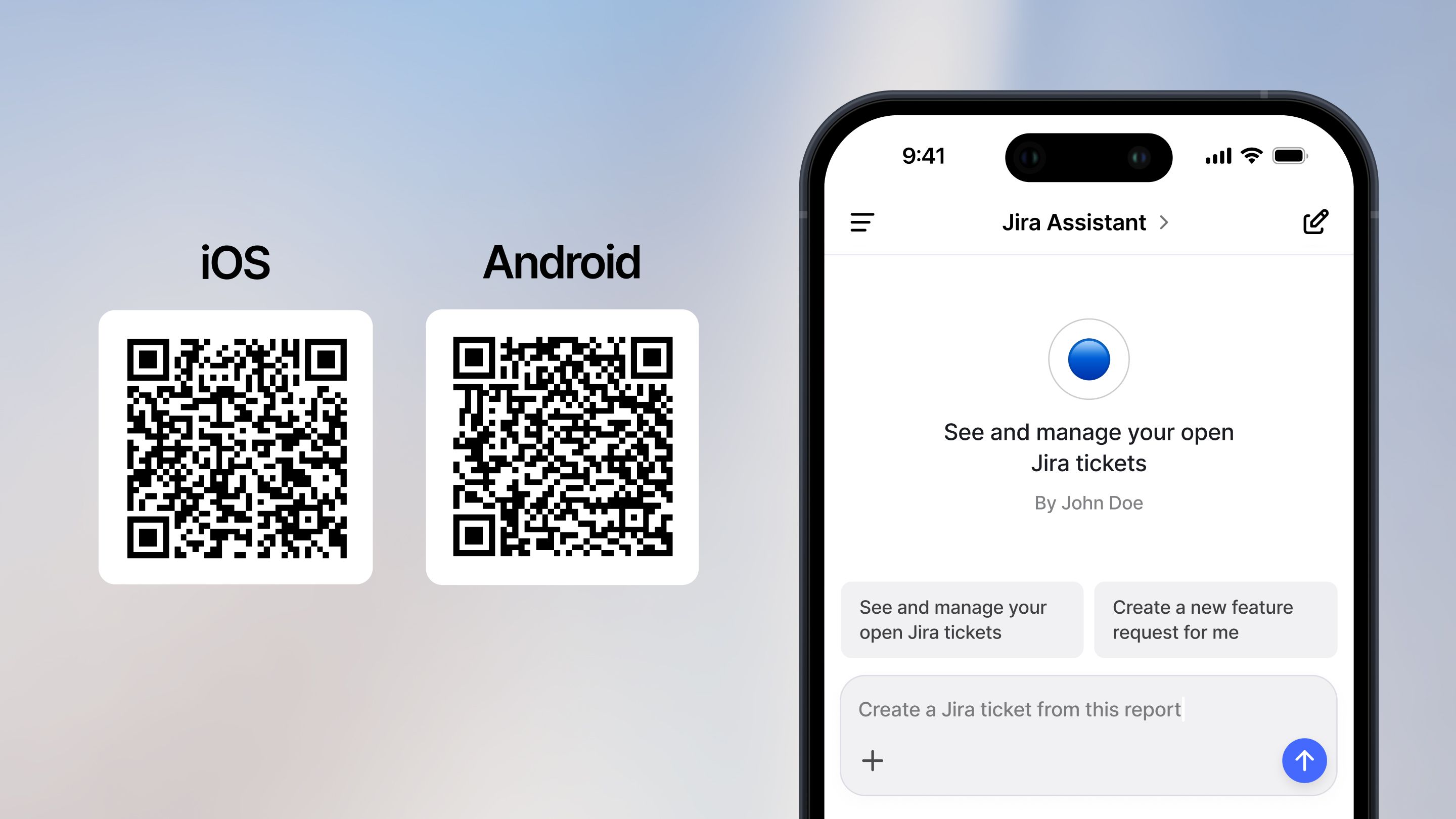

Android and iOS App

Today, we are excited to launch the Langdock mobile app! You can now download Langdock as a dedicated Android or iOS app and use AI from your phone.

You can now use Langdock wherever you are: choose between your models, and use the chat and your assistants on the go.

Additionally, we added a highly requested feature: You can now use your voice as input. Langdock transcribes it and submits it as a prompt. Look out for the microphone icon in the prompt input field!

To download the app, visit the Apple App Store or the Google Play Store.

- Voice input in the browser: In addition to the mobile app, you can now use voice input when you use Langdock in the browser.

- Assistant management: Admins now have an improved way of managing assistants in the workspace. Admins can verify certain assistants to highlight them in the assistant list and reassign the ownership of an assistant if the previous owner left the company. You can find these options in the workspace settings.

- New integrations: We added new integrations including Salesforce, GitHub, Slack, Airtable, Zendesk, Snowflake and DeepL.

- Increased character limit for text files: We increased the limit for text files from 2M to 4M characters.

- Admin mode for integrations: Admins can now test all integrations before they enable them for the entire workspace. Access to integrations can be managed in the workspace settings.

New GPT-4o version

We've integrated a new version of GPT-4o into our platform! 🚀

This powerful version delivers improved response quality and faster generation times.

The model was previously available as "GPT-4o (latest)". We have now merged the two models, and the standard "GPT-4o" is the newer model.

The GPT-4o image generation capability announced a few days ago is not available in a version hosted on EU servers yet. We will add it as soon as it becomes available in the EU.

We've also made several improvements to enhance your experience:

- Text formatting preservation: When you manually copy parts of responses in chats and assistants, the formatting is now preserved, just as with the copy message button

- Native Mermaid diagram support: Create your own Mermaid diagrams, such as flowcharts, sequence diagrams and many more, directly in our chat

- o3 mini via API: OpenAI's o3 mini reasoning model is now available via our API

- Table formatting: We improved the formatting of tables in the chat. This includes the appearance, the copying behavior and the ability to download a CSV of generated tables

New Integrations

We launched our new integrations! It’s much easier to integrate other software tools into Langdock now to retrieve data and take actions. The update consists of three main parts:

- 20+ native integrations are now available in Langdock

- An easier way to build integrations for your own tools

- Improvements to existing integrations and knowledge folders

New integrations and actions

We made integrating external tools into your assistants easier and pre-built many new integrations for the tools our customers use. For example, you can now use the following integrations: Jira, HubSpot, Google Sheets, Excel, Outlook, Google Calendar, and Google Mail.

You can now easily add actions that your assistants can perform. Example actions are:

- Write email drafts and send them to Google Mail or Outlook

- Create or update deals in HubSpot

- Write and update tickets in Jira

- Add an entry to a Google Sheet or an Excel Sheet

- Send a message in a Microsoft Teams chat

- And many more...

Here are more details on how to use them. If you’re missing an integration or specific action, please let us know!

Integrate your own tools

The Langdock team will build integrations to all standard software tools in the coming weeks. If we don’t have an integration (yet), or you want to integrate an internal tool, you can build your own integrations.

We deprecated the previous OpenAPI-schema-based integrations in favor of a simpler integration builder that also allows you to write custom JavaScript to cover all kinds of edge cases. The integrations/actions now live outside of assistants, so you can share and reuse them in multiple assistants. You can follow this guide to set up your own REST API based integrations.

Improvements to existing integrations and knowledge sources

We also improved the interface and experience of existing integrations. Here are the most significant changes:

- When you attach a document from an integration (e.g., SharePoint or Google Drive) as assistant knowledge, we now refresh the content of the document every 24 hours. This ensures that you always work with the latest version of the document in your Langdock assistant. You can also manually refresh a document at any time.

- Knowledge folders can now be shared with users, groups, and the workspace (similar to assistants). The knowledge folders moved from the account settings into the integrations menu to make them more visible.

- If you already built custom actions in an assistant, they are still available. We marked them as read-only, and they will be deprecated on April 30th. We recommend migrating your existing actions to our new, improved actions. If your action is not available out of the box yet, let us know if you need help migrating it.

- Vector databases were also moved from individual assistants to the integrations menu to make it easier to reuse connections. Assistants with existing vector databases were migrated accordingly to ensure they work as before.

Additional information for workspace admins:

- By default, all integrations are enabled. You can configure which integrations should be enabled in your workspace settings.

- Workspace-wide integrations (Google Drive & Confluence via service accounts) are now deprecated in favor of the new integrations. Please let your users know so they can configure the integrations manually. The functionalities and permissions are covered completely by the new integrations.

- The permissions per user role have changed to reflect the new integration framework: The permissions “Connect Vector Databases” and “Connect Actions” were deprecated, and the new permissions are “Share knowledge folder” and “Create integrations.”

This new integration framework will allow for many more use cases in Langdock, and it’s just the beginning. In the coming weeks, we’ll add many more functionalities to work with all kinds of data in Langdock. Stay tuned!